This article starts a series of blog posts on my ventures into Android land after having used iOS devices for 6 years. I have never been a strong believer in the Apple ecosystem, and my attempts to warm to MacBooks and Mac Pros always ended with installing Linux on them, so I guess I wasn't really the ideal target for iOS. My iOS devices were also permanently jailbroken; otherwise I would have felt amputated. But as much as I disliked the lock-in and closed environment of the iOS world, it was, from the user's perspective, surprisingly well done and smooth. So it was with a certain level of tension that I finally switched to Linux^WAndroid.

If you don't want to read on, here is the preliminary conclusion: Why didn't I do it earlier!

But before we go into details, let me start with my background:

History of my devices

Originally I was a big opponent of smart phones and preferred the Unix way: one device for one thing. So I had a normal phone and (various) Palm devices (Tungsten X, Tungsten C, and above all my beloved Handera TRGpro). I loved the Palm world and considered it superior to the then smart phone world, until I came to Japan, where proper Japanese support and a usable Japanese input method posed a big hurdle. The Palm devices had a stylus and handwriting input fields, but Japanese input was practically impossible and a huge pain. Searching for a word in Japanese was more of a hurdle than looking it up in a printed dictionary.

In addition, I needed a phone in Japan, so I plunged into the smart phone world and got myself an iPhone (3G). What a world opened up for me: easy typing of Japanese, dictionaries, on-the-fly translation, woooow! And above all, I discovered my most beloved and to this day one of my most important programs: Flashcards Deluxe. I have to say that it is 80% thanks to this program that my Japanese learning speed has accelerated considerably. There is nothing more important for me than getting drilled in a systematic way.

But I digress. With Flashcards Deluxe on the iPhone, I had within a rather short time created 10000 or more flashcards, and moving to a different platform (Android) was for quite some time practically impossible without losing years of statistics and learning, so after 2 years I renewed my contract together with an iPhone 4s. Another two years passed, and these years brought an Android version of Flashcards Deluxe as well as Dropbox syncing, so I would have had no excuse anymore to remain in iOS land, were it not for an iPhone 5s that was passed to me near the end of my fourth year, so I again extended the contract for two years.

Finally, after 6 years of iPhone devices, this January I decided it was time to switch to Android. After lots of thinking, comparing, and requesting advice from good friends with more experience in the smart phone market, I went for a Google Nexus 6p.

Google Nexus 6p

I will not repeat the specs of this phone as they are widely available on the net. My original plan was a Samsung S6, but after consultation with an expert I decided on an original Google phone for better security support. That left me with the choice between a Nexus 5x and 6p, and due to price differences (prices of mobiles are ridiculously strange in Japan) I went for the 6p instead of the 5x. One point that made the decision for this slightly too big device easy was the fact that it uses a great AMOLED display.

Moving the data

Since I was using Google Calendar and Google Contacts already on the iPhone, moving to the Android phone was far less of a hassle than I thought. My contacts and events showed up without a hiccup. Most of the usual apps are nowadays available on both iOS and Android, so the most difficult thing was remembering all the passwords to log into the applications again (SNS like G+, FB, Twitter etc). The same is more or less true for messengers of all kinds (Line, WhatsApp, Threema, etc), but here one is advised to check with the respective web site first to make sure one does not lose all of the important data. Line for example is a stupid ***** that deletes all previous chats on the old phone and does not make them available on the new one. WhatsApp can be converted with a special conversion program. Threema, too, allows for transfer of IDs.

Move of applications

After that came the hunt for replacement applications for those that are not available as is on Android:

Mail

At first, like probably everyone, I used the shipped GMail program. It might be good for Google Mail accounts, but for anything else it is just a real pain. Thus, I searched a bit and finally settled (for now) on K-9 Mail: it is open source, open development, feature rich, and more of a hacker's type of email program, perfectly suited to me.

There is a commercial variant called K@-Mail that says that it improves the user interface and some usability items as well as features, but I didn't see much of an advantage over the original version (which is completely free), and in fact some of my accounts didn't work at all. So I remain with K-9 Mail, and I think this is a good decision.

Calendar

Managing calendars is one of the most important tasks for me. I have been a fervent supporter of DateBk4, DateBk 5, and DateBk 6 on the original Palm series, and when I left the Palm world it was with great pain that I had to lose DateBk. Not only because it was a simply fantastic calendar program that allowed me to keep track of all my climbing routes and festivities in a much more advanced way than any other calendaring application, but also because the programmer of the DateBk series is running the Dewar Wildlife Trust, a gorilla rescue group, and a lot of the money he makes from the app sales goes to rescuing gorillas.

With the switch to iOS this option was gone, and I first used the built-in calendar application (which is so weak) and later, for a long time, Pocket Informant Pro. This is a very good program and probably the only one that can compete with DateBk with respect to functionality and usefulness.

During the time I was locked into iOS, I realized that the world had moved on and that a new version of the DateBk series, written in Java, had been developed, called Pimlical. First only available on Windows, it later became available on Android and Linux, too.

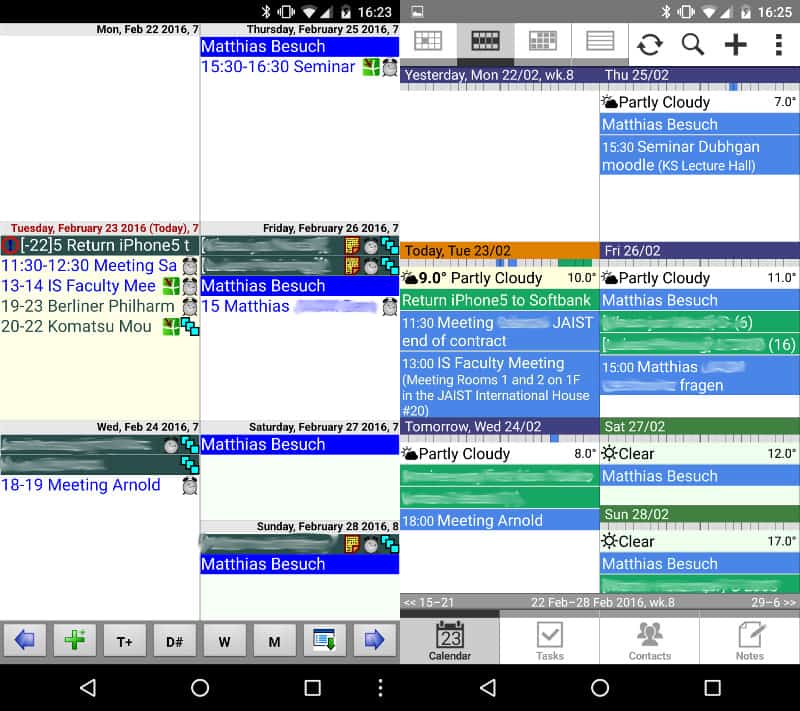

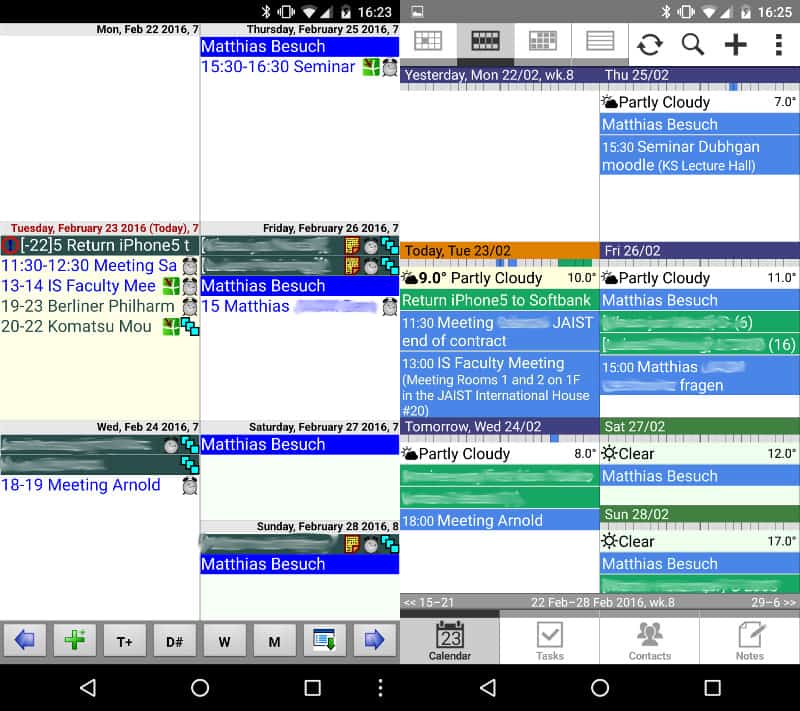

The following screen shot puts Pimlical on the left, and Pocket Informant on the right. I will write a more detailed comparison in future; in short: Pocket Informant is more streamlined and polished, Pimlical has more configuration options. Practically everything can be adjusted to one's needs, and in addition there is also a desktop application that syncs either with Google and the phone, or only with the phone if you want to live off the grid.

So nowadays on Android I have both Pocket Informant Pro as well as Pimlical, but after a short time I have now switched practically exclusively to Pimlical.

Notes

Here there is pain, HUGE PAIN!!! iOS has an excellent application for notes, called simply Notebooks. This little pearl was my workhorse for everything (more or less) memorable. From poems and song texts to bus timetables, from PDFs to GIFs, from Markdown to HTML, everything could be saved into Notebooks, displayed, edited, ordered. And above all it had automatic background sync with Dropbox. So I could drop new files into the respective sub-folder of my Dropbox folder and could be sure I had the files available on my phone when I left for a trip. And there is a huge bag of features that I haven't even tapped into!

Android is unfortunately not on the list of supported platforms of Notebooks. So I searched far and wide, without any success. There are all kinds of notes apps: flashy colors, overly simple, fast and slow, stylish and plain, but none of them provided even half of the features of Notebooks. None, not even half.

I still hope I might find the ultimate notes application, or even better, an Android version of the original Notebooks application (but this is not high on the developer's todo list), but for now I am in despair.

The Rest

As I said, most apps are nowadays available on both platforms, so there is not much more to do than download the respective Android app and log in again. That worked very nicely across practically all apps.

Things I don't like (i.e., which are broken!) on Android

Although a very convenient system and perfectly made to fit my taste, there are some things that are a huge pain (and a big shame on Google for not being able to fix them for such a long time!):

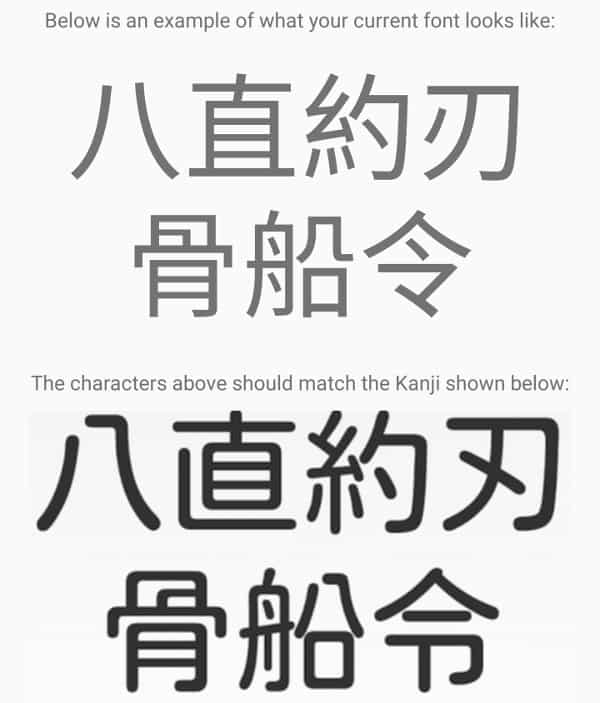

Japanese fonts when the device is in English interface language

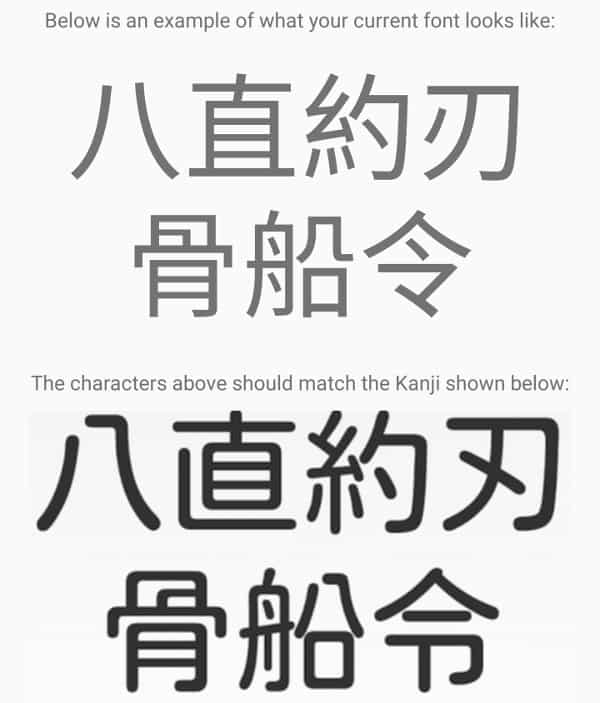

In case you are a foreigner living in Japan and want your Android phone in English, but still read emails, news, etc in Japanese, then Android provides you with the worst, namely Chinese fonts:

This is a well known problem and I have blogged about fixing the very same problem on Linux (Debian), where the solution is a simple reshuffling in the fontconfig configuration files. There is even an application for it in the Google store, Kanji Fix, but it needs a rooted device (which I haven't done till now, my failure!). I can only hope that Google fixes this completely stupid problem in a future version.

The "Me" problem

Another of these beastly problems: the Android Contacts application has an entry for "Me", which unfortunately, no idea why, cannot be linked with my normal "me" in the list of contacts. There are reports all over the Internet, strange suggestions, and no real solution. Again, a simple thing that should work but doesn't.

Invisible Images folder in MTP mode

A more annoying problem is that the camera folder under Photos does not show up when connecting the device in MTP mode to my computer, and as a consequence I am unable to copy photos from the device to my computer.

The solution I am using at the moment is to move the photos with a file manager to a new folder which is visible during MTP communication, and copy the photos from there.

This, too, should be something trivial, but alas, despite a lot of posts on the internet I couldn't find a proper solution.

Google Music

As written somewhere else, Google Music has switched from a 5-star system to an up/down system, which is a huge PITA.

Things I do like (or I discovered) on Android

There are some things I haven't used or tried on iOS (they might be possible there) which I really like:

Yubikey Neo support

I will write about this in a different blog post, but nowadays I have my GPG keys on a hardware token (Yubikey Neo), and the application OpenKeychain on Android works nicely with both K-9 Mail and, via NFC, with my Yubikey. That is a great tool!

Bluetooth streaming

Bluetooth on iOS devices was always a bit broken for me, so to connect my phone to my old car radio I needed a radio transmitter that was connected to the cable port of the iPhone. With Android I use a Bluetooth radio device (it receives data via Bluetooth and sends the music out via radio waves for a car stereo to receive). Now if only my monthly data limit weren't that low...

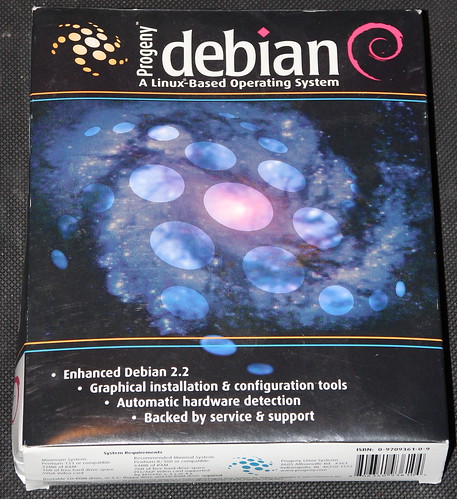

Debian on Android

Yes, you can have a full Debian system running in your terminal on Android. There are several applications providing this feature, and I am rather surprised how smoothly it works.

Conclusion

My preliminary conclusion is that the switch to Android at this time was perfectly timed, and from the technological side I should have done it much earlier. In future blog posts I will discuss particular aspects of this transition in more detail.

If you have any suggestions for me, in particular for a good note-taking application, please let me know!

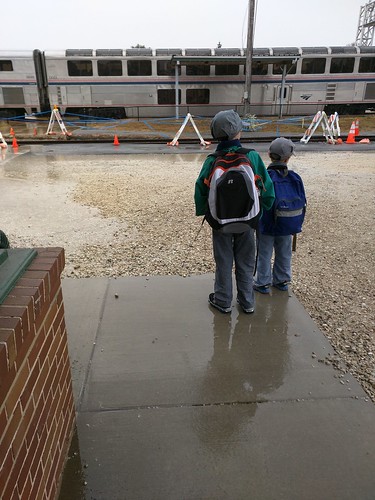

Because, well, what could be more fun than spending a few days in the world's only real Pullman sleeping car, on its original service track, inside a hotel?

We were on a family vacation to Indianapolis, staying in what two railfan boys were sure to enjoy: a hotel actually built into part of the historic Indianapolis Union Station complex. This is the original train track and trainshed. They moved in the Pullman cars, then built the hotel around them. Jacob and Oliver played for hours, acting as conductors and engineers, sending their train all across the country to pick up and drop off passengers.

Opa!

Have you ever seen a kid's face when you introduce them to something totally new, and they think it is really exciting, but a little scary too?

That was Jacob and Oliver when I introduced them to saganaki (flaming cheese) at a Greek restaurant. The conversation went a little like this:

"Our waitress will bring out some cheese. And she will set it ON FIRE right by our table!"

"Will it burn the ceiling?"

"No, she'll be careful."

"Will it be a HUGE fire?"

"About a medium-sized fire."

"Then what will happen?"

"She'll yell OPA! and we'll eat the cheese after the fire goes out."

"Does it taste good?"

"Oh yes. My favorite!"

It turned out several tables had ordered saganaki that evening, so whenever I saw it coming out, I'd direct their attention to it. Jacob decided that everyone should call it "opa" instead of saganaki because that's what the waitstaff always said. Pretty soon whenever they'd see something appear in the window from the kitchen, there'd be craning necks and excited jabbering of "maybe that's our opa!"

And when it finally WAS our "opa", there were laughs of delight and I suspect they thought that was the best cheese ever.

Giggling Elevators

Fun times were had pressing noses against the glass around the elevator. Laura and I sat on a nearby sofa while Jacob and Oliver sat by the elevators, anxiously waiting for someone to need to go up or down. They pointed and waved at elevators coming down, and when elevator passengers waved back, Oliver would burst out giggling and run over to Laura and me with excitement.

Some history

We got to see the grand hall of Indianapolis Union Station. What a treat to be able to set foot in this magnificent, historic space, the world's oldest union station. We even got to see the office where Thomas Edison worked and, as a hotel employee explained, was fired for doing too many experiments on the job.

Water and walkways

Indy has a system of elevated walkways spanning quite a section of downtown. It can be rather complex navigating them, and after our first day there, I offered to let Jacob and Oliver be the leaders. Boy did they take pride in that! They stopped to carefully study maps and signs, and proudly announced "this way" or "turn here", and were usually correct.

And it was the same in the paddleboat we took down the canal. Both boys wanted to be in charge of steering, and we only scared a few other paddleboaters.

Fireworks

Our visit ended with the grand fireworks show downtown, set off from atop a skyscraper. I had been scouting for places to watch from, and figured that a bridge-walkway would be great. A couple other families had that thought too, and we all watched the 20-minute show in the drizzle.

Loving brothers

By far my favorite photo from the week is this one, of Jacob and Oliver asleep, snuggled up next to each other under the covers. They sure are loving and caring brothers, and had a great time playing together.

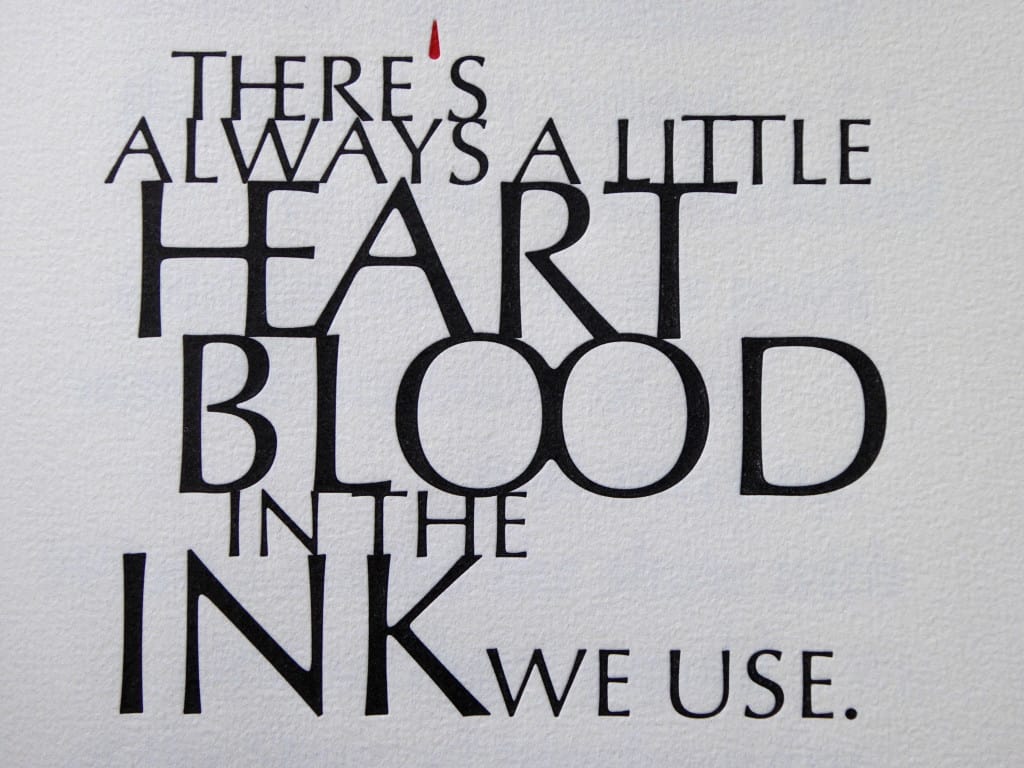

As nearly anyone who's worked with me will attest to, I've long since

touted

Debian is quite probably the project that most uses an OpenPGP implementation (that is, GnuPG, or gpg) for many of its internal operations, and that places most trust in it. PGP is also very widely used, of course, in many other projects and between individuals. It is regarded as a secure way to do all sorts of crypto (mainly, encrypting/decrypting private stuff, signing public stuff, certifying other people's identities). PGP's lineage traces back to Phil Zimmermann's program, first published in 1991. By far, not a newcomer.

PGP is secure, as it was 25 years ago. However, some uses of it might not be so. We went through several migrations related to algorithmic weaknesses (i.e. v3 keys using MD5; SHA1 is strongly discouraged, although not yet completely broken, and it should be avoided as well) or to computational complexity (as the migration away from keys smaller than 2048 bits, strongly preferring 4096 bits). But some vulnerabilities are related to human usage (that is, to configuration).

Today, Enrico Zini gave us a heads-up in the #debian-keyring IRC channel, and started a thread in the debian-private mailing list; I understand the mail to a private list was partly meant to get our collective attention, and to allow for potentially security-relevant information to be shared. I won't go into details about what is, is not, should be or should not be private, but I'll post here only what's public information already.

What are short and long key IDs?
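
As background: for OpenPGP v4 keys, the fingerprint is a 160-bit value, the long key ID is its low-order 64 bits, and the short key ID is its low-order 32 bits. A minimal Python sketch of that relationship follows; the fingerprint is made up for illustration and the function name `key_ids` is my own, not part of GnuPG.

```python
from typing import Tuple


def key_ids(fingerprint: str) -> Tuple[str, str]:
    """Return (long_id, short_id) for a 40-hex-digit OpenPGP v4 fingerprint."""
    fpr = fingerprint.replace(" ", "").upper()
    if len(fpr) != 40 or any(c not in "0123456789ABCDEF" for c in fpr):
        raise ValueError("expected a 160-bit fingerprint as 40 hex digits")
    # Long ID: last 16 hex digits (64 bits); short ID: last 8 (32 bits).
    return fpr[-16:], fpr[-8:]


# Made-up fingerprint, for illustration only (not a real key).
EXAMPLE = "0123 4567 89AB CDEF 0123  4567 89AB CDEF 0123 4567"

long_id, short_id = key_ids(EXAMPLE)
print("long key ID: ", long_id)
print("short key ID:", short_id)
```

A 32-bit short ID leaves only about 4.3 billion possible values, so it identifies a key far less precisely than the long ID or the full fingerprint.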

I'll start by quoting Enrico's mail:

Reaction to Sarah's post about

VLANd is a Python program intended to make it easy to manage port-based VLAN setups across multiple switches in a network. It is designed to be vendor-agnostic, with a clean pluggable driver API to allow for a wide range of different switches to be controlled together.
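
To give a rough idea of what a vendor-agnostic, pluggable driver layer can look like in Python, here is a small sketch. It is purely illustrative: the class and function names (`SwitchDriver`, `FakeSwitch`, `move_port`) are hypothetical and are not taken from VLANd's actual code.

```python
from abc import ABC, abstractmethod
from typing import Dict


class SwitchDriver(ABC):
    """Hypothetical vendor-neutral interface (not VLANd's real API)."""

    @abstractmethod
    def set_port_vlan(self, port: str, vlan_id: int) -> None:
        """Put a port into the given port-based VLAN."""

    @abstractmethod
    def get_port_vlan(self, port: str) -> int:
        """Return the VLAN a port currently belongs to."""


class FakeSwitch(SwitchDriver):
    """In-memory stand-in for a vendor-specific driver."""

    def __init__(self) -> None:
        self._ports: Dict[str, int] = {}

    def set_port_vlan(self, port: str, vlan_id: int) -> None:
        # 802.1Q VLAN IDs 0 and 4095 are reserved.
        if not 1 <= vlan_id <= 4094:
            raise ValueError("VLAN ID must be in 1..4094")
        self._ports[port] = vlan_id

    def get_port_vlan(self, port: str) -> int:
        return self._ports.get(port, 1)  # default VLAN 1


def move_port(switches: Dict[str, SwitchDriver],
              switch: str, port: str, vlan_id: int) -> None:
    """Caller code only sees the common interface, whatever the vendor."""
    switches[switch].set_port_vlan(port, vlan_id)


switches = {"rack1": FakeSwitch(), "rack2": FakeSwitch()}
move_port(switches, "rack1", "port7", 42)
print(switches["rack1"].get_port_vlan("port7"))
```

The point of such a design is that supporting another switch model means writing one more driver class, while the code that manages VLANs across the network stays unchanged.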

There's more information in

the

The latest stable release of Mono has happened, the first bugfix update to our 4.0 branch. Here are the release highlights, and some other goodies.

Stable Packages

This release covers Mono 4.0.1, and MonoDevelop 5.9. As promised last time, this includes builds for RPM-based x64 systems (CentOS 7 minimum), Debian-based x64, i386, ARMv5 Soft Float, and ARMv7 Hard Float systems (Debian 7/Ubuntu 12.04 minimum).

Version numbering

From now on, we're going to be clearer with our version numbering scheme. Historically, we've shipped, say, 4.0.0 to the public; internally, there have been a lot of builds on this target branch, all of which get an internal revision number. 4.0.0 as shipped was in fact 4.0.0.143 internally; that was the first 4.0.0 branch release approved for stable release.

This release is the first service release on the 4.0.0 branch, numbered 4.0.1.44. It'll be officially referred to as 4.0.1 in some places, but isn't the same as 4.0.1.0, which was already released on Linux/Windows a while back to include an emergency bugfix for those platforms.

That was sorta a screwup really. Using the 4-part version removes the ambiguity, rather than having 44 different 4.0.1's in existence. And we'll aim to be clearer in future about what is alpha, what is beta, and what is final (and what is a random emergency snapshot).
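
To make the ambiguity concrete, here is a small Python sketch (my own illustration, not part of any Mono tooling; `parse_version` and `short_name` are hypothetical helper names) that treats the versions mentioned above as 4-part tuples:

```python
from typing import Tuple

Version = Tuple[int, int, int, int]


def parse_version(text: str) -> Version:
    """Parse a 4-part version string such as '4.0.1.44'."""
    parts = tuple(int(p) for p in text.split("."))
    if len(parts) != 4:
        raise ValueError("expected four dot-separated numbers: %r" % text)
    return parts  # type: ignore[return-value]


def short_name(version: Version) -> str:
    """The 3-part name that hides the internal revision number."""
    return ".".join(str(n) for n in version[:3])


first_stable = parse_version("4.0.0.143")
emergency = parse_version("4.0.1.0")
service = parse_version("4.0.1.44")

# Both builds collapse to the same 3-part name...
print(short_name(emergency), short_name(service))
# ...but the 4-part tuples are distinct and order correctly.
print(sorted([service, first_stable, emergency]))
```

Comparing the full tuples keeps 4.0.1.0 and 4.0.1.44 apart, which the 3-part name alone cannot do.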

Alpha Linux packages

Want to see things earlier? We've now got the structure in place to provide Linux packages (and source releases) to mirror what we do on Mac. When we upload a prospective package to our Mac customers, we will automatically trigger builds for Linux too. See http://www.mono-project.com/download/alpha/

Beta Linux packages

See above. s/alpha/beta/.

Weekly git Master snapshots

We already have packages in place for every git commit, which parallel-install Mono into /opt. This is different.

Weekly (or, right now, when I manually run the requisite Jenkins job), the latest Mac build of Mono git master from our internal CI system will be copied to a public location just for you, a source tarball generated, and packages built. See

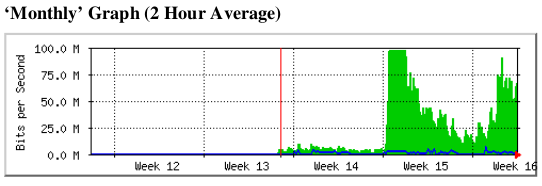

I've had a half-broken temperature monitoring setup at home for quite some time. It started out with an Atom-based NAS, a USB-serial adapter and a passive 1-wire adapter. It sometimes worked, then stopped working, then started when poked with a stick. Later, the NAS was moved under the stairs and I put a Beaglebone Black in its old place. The temperature monitoring thereafter never really worked, but I didn't have the time to fix it. Over the last few days, I've managed to get it working again, of course by replacing nearly all the existing components.

I'm using the DS18B20 sensors. They're about USD 1 a piece on Ebay (when buying small quantities) and seem to work quite ok.
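
For readers who want to try these sensors, here is a minimal Python sketch of reading them, assuming the Linux kernel's 1-wire drivers (w1_therm) are loaded so that each DS18B20 appears under /sys/bus/w1/devices/ with the family prefix 28-. This is not necessarily how my own setup reads them; the function names are hypothetical and the sample text (including its CRC byte) is only illustrative of the file format.

```python
import glob
from typing import Dict, Optional


def parse_w1_slave(text: str) -> Optional[float]:
    """Parse the two-line w1_slave format; return degrees Celsius or None."""
    lines = text.strip().splitlines()
    # The first line ends in YES when the CRC check passed.
    if len(lines) < 2 or not lines[0].strip().endswith("YES"):
        return None
    marker = lines[1].rfind("t=")
    if marker == -1:
        return None
    # The value after t= is in thousandths of a degree Celsius.
    return int(lines[1][marker + 2:]) / 1000.0


def read_all() -> Dict[str, Optional[float]]:
    """Read every DS18B20 (family code 28) the kernel knows about."""
    readings = {}
    for path in glob.glob("/sys/bus/w1/devices/28-*/w1_slave"):
        with open(path) as handle:
            readings[path.split("/")[-2]] = parse_w1_slave(handle.read())
    return readings


# Illustrative sample of the file contents.
SAMPLE = (
    "72 01 4b 46 7f ff 0e 10 57 : crc=57 YES\n"
    "72 01 4b 46 7f ff 0e 10 57 t=23125\n"
)
print(parse_w1_slave(SAMPLE))
```

Returning None on a failed CRC line makes dropouts visible to the caller instead of silently logging a bogus temperature.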

My first task was to address the reliability problems: dropouts and really poor performance. I thought the passive adapter was problematic, in particular with the wire lengths I'm using, and I therefore wanted to replace it with something else. The BBB has GPIO support, and various blog posts suggested using that. However, I'm running Debian on my BBB, which doesn't have support for DTB overrides, so I needed to patch the kernel DTB. (Apparently, DTB overrides are landing upstream, but obviously not in time for Jessie.) I've never even looked at Device Tree before, but the structure was reasonably simple and with a
